The Drafting Table Is Dead. The Algorithm Has the Pen.

You are already 3 project cycles behind. Not because your team lacks talent, but because the practice still runs on a workflow designed for drafting tables, not data streams. The global construction industry loses an estimated $1.6 trillion annually to rework, coordination failures, and late-stage design changes. Trace most of those failures back to their source and you find the same root cause: the disconnection between ideation, environmental simulation, and digital fabrication. The new generation of AI architecture design tools that architects use in 2026 is built to close that gap, not partially but structurally.

In Rotterdam, the municipality’s Climate Resilient Design Initiative now requires a full lifecycle carbon analysis for any public-sector building above 2,000 m² before permit approval. Practices that cannot generate that analysis computationally (inside their design environment, not exported to a consultant’s spreadsheet) are simply not shortlisted. This is not a future scenario. It is a procurement condition that took effect in Q1 2025. Running an integrated AI design pipeline instead of serial handoffs is no longer a competitive advantage. It is an eligibility threshold.

The same pressure is playing out differently in Singapore, where the Building and Construction Authority’s CORENET X platform began processing AI-validated permit submissions in 2024, cutting standard residential plan-check cycles from an average of 34 days to under 72 hours for code-compliant submissions. The firms equipped to submit into that system are capturing procurement cycles that others are structurally excluded from. The AI architecture design tools shaping this new competitive landscape are not experimental. They are operational, measurable, and already deciding which practices get shortlisted.

Nuvira Perspective: Human-Machine Synthesis as Design Doctrine

At Nuvira Space, we do not treat AI as a rendering accelerator or a documentation shortcut. Our position is more precise: AI architecture design tools are the connective tissue between the architect’s spatial intuition and the structural, climatic, and material systems that must ultimately carry the building. We call this human-machine synthesis — a design posture where the architect sets the performance constraints, defines the spatial intent, and interprets the outputs, while algorithmic engines execute the combinatorial problem-solving that no human team can resolve at the required resolution, within a viable project timeline.

The practices pulling ahead in 2026 are not the ones with the most software subscriptions. They are the ones that have rebuilt their workflow logic around feedback loops — where geometry, structure, energy performance, and material carbon are recalculated in real time as a design decision is made, not after it is committed. A floor-to-floor height increase of 400 mm is not just a spatial choice. In an integrated pipeline, it triggers a simultaneous recalculation of structural steel tonnage, annual heating demand, glazing solar gain, and construction cost. The architect sees all 4 consequences before confirming the decision. That is the operational difference this article unpacks.

This is not about replacing the architect’s judgment. It is about giving that judgment an instrument panel it has never had access to before.

Technical Deep Dive: The Stack That Runs the Next Firm

Generative Design: From Drawing to Defining

Traditional architectural design begins with a spatial hypothesis — a sketch, a section, a massing study. Generative AI inverts that logic entirely. You define performance constraints first: floor plate efficiency above 78%, structural span no greater than 14 m, glazing ratio between 30% and 45% per facade, embodied carbon below 350 kgCO₂e/m², net lettable area above 4,200 m². The algorithm then generates thousands of schema variants against those constraints simultaneously and ranks them by how well they satisfy the full constraint set — not one variable in isolation, but all of them in conflict with each other, which is precisely the condition real design operates in.

The output is not a finished design. It is a performance-ranked design space — a field of viable possibilities, each carrying its own structural logic, spatial quality, daylighting profile, and carbon footprint already calculated. Your role is to navigate that field intelligently, applying spatial judgment, contextual reading, and programmatic nuance that the algorithm cannot weigh. This is not faster sketching. It is a fundamentally different cognitive task, and it produces fundamentally different architecture. For a deeper grounding in how this methodology is reshaping practice, see Nuvira Space’s analysis of generative AI in architecture.
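The constraint-first logic described above can be sketched in a few lines of Python. This is a minimal illustration, not any platform's implementation: the `Variant` class, its field names, and the three sample variants are hypothetical, and only the threshold values are taken from the constraint set quoted in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One generated scheme with pre-computed metrics (field names are illustrative)."""
    name: str
    floor_plate_efficiency: float   # fraction between 0 and 1
    max_span_m: float
    glazing_ratios: tuple           # one fraction per facade
    embodied_carbon: float          # kgCO2e/m2
    net_lettable_area_m2: float


def satisfies_brief(v: Variant) -> bool:
    """Apply the constraint set quoted in the text; every check must pass."""
    return (
        v.floor_plate_efficiency > 0.78
        and v.max_span_m <= 14.0
        and all(0.30 <= g <= 0.45 for g in v.glazing_ratios)
        and v.embodied_carbon < 350.0
        and v.net_lettable_area_m2 > 4200.0
    )


# Made-up sample variants, for illustration only.
variants = [
    Variant("A", 0.81, 13.5, (0.32, 0.40, 0.35, 0.30), 340.0, 4350.0),
    Variant("B", 0.76, 12.0, (0.35, 0.35, 0.35, 0.35), 300.0, 4500.0),  # efficiency too low
    Variant("C", 0.80, 14.0, (0.44, 0.31, 0.50, 0.33), 320.0, 4300.0),  # one facade over-glazed
]

viable = [v.name for v in variants if satisfies_brief(v)]
print(viable)
```

A real engine would generate the variants and compute their metrics itself; the point here is only that the brief becomes an executable filter, and the architect's judgment starts with whatever survives it.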

Generative Engine Specifications — Current Platforms, 2026

- Constraint resolution: up to 47 simultaneous parametric variables per generation cycle

- Schema output per run: 800–12,000 variants depending on complexity tier and computational budget

- Structural integration: real-time Finite Element Analysis (FEA) with mesh resolution down to 25 mm

- Carbon calculation engine: aligned with EN 15978 lifecycle assessment boundary definitions

- Iteration cycle time: 4–18 minutes for a mid-complexity mixed-use floor plate on a 16-core workstation

- Multi-objective Pareto optimisation: ranks variants across 3+ competing objectives simultaneously
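For readers unfamiliar with Pareto ranking, the idea behind the last specification can be shown with a short sketch. It assumes every objective is expressed so that lower is better, and the variant names and scores are invented for illustration; commercial engines use far more efficient algorithms than this pairwise comparison.

```python
def dominates(a, b):
    """a dominates b if it is no worse on every objective and strictly better on at least one.
    All objectives are expressed so that lower is better."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(scores):
    """Return the sorted names of non-dominated variants from a {name: objectives} mapping."""
    return sorted(
        name
        for name, s in scores.items()
        if not any(dominates(other, s) for o_name, other in scores.items() if o_name != name)
    )


# Hypothetical objectives per variant: (embodied carbon kgCO2e/m2, cost index, EUI kWh/m2/yr).
scores = {
    "V1": (300.0, 1.00, 95.0),
    "V2": (280.0, 1.10, 90.0),
    "V3": (310.0, 1.05, 97.0),   # worse than V1 on all three objectives
    "V4": (330.0, 0.92, 99.0),
}

front = pareto_front(scores)
print(front)
```

Variants on the front are the ones where improving one objective necessarily costs you on another. Choosing among them is the part that stays with the architect.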

Digital Fabrication Integration: Where the Model Becomes the Factory

The most consequential performance gain from AI-integrated design tools in 2026 is not visualisation speed or documentation efficiency. It is fabrication fidelity — the degree to which the design model can communicate directly with manufacturing equipment, bypassing the traditional shop drawing translation layer entirely. Modern CNC mills, 6-axis and 8-axis robotic arms, and large-format 3D concrete extrusion systems can now receive toolpath data derived directly from a parametric model. The human decision point is the geometry and the material specification. The conversion from design intent to machining instruction is automated and verifiable.

In practice, a bespoke steel junction node for a diagrid facade can be modelled in Grasshopper with Karamba3D structural validation, exported as a machining file, and cut on a 5-axis CNC within the same working session. On more complex projects, 6-axis and 8-axis robotic arms are used for on-site concrete extrusion, bespoke formwork fabrication, and complex timber joinery — tasks that previously required weeks of manual detailing translated through multiple contractors.

The Robots plugin for Grasshopper supports direct KUKA, ABB, and Fanuc arm programming, meaning the fabrication simulation and the design model share the same parametric geometry. A geometry change in the design model propagates to the fabrication simulation automatically. Nuvira Space’s technical overview of robotic fabrication in architecture covers the hardware-software integration layer in detail.
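To make the model-to-machine idea concrete, here is a minimal sketch of a parametric outline being converted into G-code for a CNC router. It is an illustration under stated assumptions, not the output of Grasshopper or the Robots plugin: the function names, cut depth, safe height, and feed rate are all hypothetical, and robotic arms are programmed in their own vendor languages, not G-code.

```python
def rectangle_outline(width_mm, height_mm):
    """Closed rectangular polyline, starting and ending at the origin."""
    return [(0.0, 0.0), (width_mm, 0.0), (width_mm, height_mm), (0.0, height_mm), (0.0, 0.0)]


def to_gcode(points, cut_depth_mm=-2.0, safe_z_mm=5.0, feed_mm_min=600):
    """Emit a minimal G-code program that traces the polyline at a fixed depth."""
    x0, y0 = points[0]
    lines = [
        "G21",                                       # units: millimetres
        "G90",                                       # absolute positioning
        f"G0 Z{safe_z_mm:.3f}",                      # retract to safe height
        f"G0 X{x0:.3f} Y{y0:.3f}",                   # rapid move to the start point
        f"G1 Z{cut_depth_mm:.3f} F{feed_mm_min}",    # plunge to cutting depth
    ]
    lines += [f"G1 X{x:.3f} Y{y:.3f} F{feed_mm_min}" for x, y in points[1:]]
    lines.append(f"G0 Z{safe_z_mm:.3f}")             # retract when the outline is closed
    return lines


program = to_gcode(rectangle_outline(120.0, 80.0))
print("\n".join(program))
```

Changing the width parameter and calling `to_gcode` again regenerates the whole toolpath, which is the propagation behaviour the text describes, reduced to its simplest form.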

Digital Fabrication Specifications

- Robotic arm compatibility: 6-axis and 8-axis arms (KUKA KR 10 R1100-2, ABB IRB 6700, Fanuc M-20iD); direct GCode and RAPID script export

- Concrete print layer resolution: 12–18 mm per pass with current large-format extrusion heads; use limited to non-loadbearing applications until 2026 code updates

- CNC tolerance: ±0.1 mm on 5-axis steel and timber CNC operations

- Full pipeline: Rhino 8 → Grasshopper → Karamba3D → Robots plugin → direct machine upload (zero manual data re-entry)

- Mass timber joinery: direct export to Hundegger K3 and Weinmann WMP 150 joinery machines from parametric timber models

- On-site robotic concrete: Contour Crafting layer bond strength reaches 85–92% of cast-in-place compressive strength at current calibration

Real-Time Environmental Simulation: Designing at the Speed of Climate

A building’s energy performance is determined in the first 20% of design decisions — orientation, massing, floor-to-floor height, envelope depth, glazing distribution. Yet traditional practice runs energy simulation as a late-stage validation check, typically at 60–80% design completion, when fundamental parameters are locked and any significant change carries a cost penalty. This is a structural workflow problem, not a competence problem. AI-embedded simulation tools resolve it by running a continuous, live energy model as you design.

Cove.tool, integrated with Revit and Rhino, processes an Energy Use Intensity (EUI) iteration in under 90 seconds for a typical floor plate using the EnergyPlus simulation engine. Autodesk Forma runs site-level wind comfort analysis using Lawson criteria and solar radiation mapping in the browser, with no simulation setup or specialist software configuration. The architectural consequence is significant: climate performance becomes a design generator, not a post-design audit. Building orientation, envelope articulation, massing setbacks, and overhang depths are driven by quantified environmental outcomes before they are driven by aesthetic convention.

Environmental Simulation Metrics

- EUI iteration speed: under 90 seconds per run (Cove.tool + EnergyPlus engine)

- Daylight Autonomy (DA): calculated per IES LM-83-12 standard within the model environment

- Annual Sunlight Exposure (ASE): automated flag for overheating risk zones above threshold

- Wind comfort: Lawson pedestrian comfort criteria assessed in Forma at site scale in under 4 minutes

- Thermal mass modelling: dynamic simulation with 1-hour timestep resolution

- Carbon tracking: EC3 material database integration — 250,000+ verified EPD records accessible within design environment
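The daylight metrics in this list reduce to simple counting once hourly illuminance values exist. The sketch below shows that counting, assuming the commonly cited LM-83 defaults (300 lux for at least 50% of occupied hours for spatial daylight autonomy; more than 1,000 lux of direct sunlight for more than 250 hours for ASE). The function names and the tiny four-hour dataset are hypothetical; a real study runs on a full year of simulated hourly data.

```python
def daylight_autonomy(illuminance_lux, threshold_lux=300.0):
    """Fraction of occupied hours in which one sensor point reaches the threshold."""
    return sum(1 for e in illuminance_lux if e >= threshold_lux) / len(illuminance_lux)


def spatial_daylight_autonomy(sensors, threshold_lux=300.0, min_fraction=0.5):
    """Share of sensor points whose daylight autonomy is at least min_fraction."""
    passing = sum(1 for s in sensors if daylight_autonomy(s, threshold_lux) >= min_fraction)
    return passing / len(sensors)


def ase_fraction(direct_lux, threshold_lux=1000.0, max_hours=250):
    """Share of sensor points with direct sunlight above the threshold for more than max_hours."""
    flagged = sum(
        1 for s in direct_lux if sum(1 for e in s if e > threshold_lux) > max_hours
    )
    return flagged / len(direct_lux)


# Toy data: 4 sensor points x 4 occupied hours (a real run covers thousands of hours).
total_lux = [[350, 400, 200, 500], [100, 150, 320, 90], [300, 300, 100, 100], [50, 60, 70, 80]]
direct_lux = [[1200, 1500, 0, 1100], [0, 0, 0, 0], [1100, 0, 0, 0], [0, 0, 0, 0]]

sda = spatial_daylight_autonomy(total_lux)
ase = ase_fraction(direct_lux, max_hours=2)   # hour limit scaled down for the toy dataset
print(sda, ase)
```

The two numbers pull in opposite directions: more glazing raises the first and, past a point, the second. That tension is why both are reported together.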

BIM Coordination and Real-Time Clash Detection

Building Information Modelling platforms in 2026 have moved beyond static clash detection reports generated overnight. Autodesk Revit with Dynamo scripting, combined with Navisworks Clash Detective API integration, now allows live multi-discipline coordination within the same federated model. Structural steel conflicts with MEP (mechanical, electrical, plumbing) routing are flagged as geometry is placed, not after the fact. The cost of resolving a coordination clash escalates at roughly 1:10:100: a clash that costs 1 unit to fix at design stage costs on the order of 10 at tender or during construction and 100 after completion. AI clash prediction tools, now embedded in Revit and ArchiCAD, pre-flag high-probability conflict zones before the conflicting element is even placed, based on pattern recognition from previous projects.

- Federated model format: IFC 4.3 (Industry Foundation Classes) — current interoperability ceiling

- AI clash prediction accuracy: 76–84% on standard MEP-structure conflicts in tested deployments (Autodesk AEC Collection benchmark, 2024)

- Real-time sync: Speckle achieves sub-5-second model synchronisation across Revit, Rhino, and Grasshopper simultaneously

- Version control: full parametric versioning with branch-and-merge logic analogous to software development Git workflows
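Underneath any clash workflow sits a plain geometric test. The sketch below shows the simplest version, an axis-aligned bounding box overlap check between structural and MEP elements. It is rule-based geometry, not the AI prediction described above, and the `Box` class, element names, and coordinates are hypothetical.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box of one model element, in metres."""
    name: str
    min_pt: tuple
    max_pt: tuple


def clashes(a: Box, b: Box, clearance: float = 0.0) -> bool:
    """True if the boxes overlap on all three axes once each is padded by `clearance`."""
    return all(
        a.min_pt[i] - clearance < b.max_pt[i] and b.min_pt[i] - clearance < a.max_pt[i]
        for i in range(3)
    )


# Made-up elements: one steel beam, one duct that runs through it, one pipe well below it.
structure = [Box("beam B1", (0.0, 0.0, 3.0), (6.0, 0.3, 3.5))]
mep = [
    Box("duct D1", (2.0, -1.0, 3.2), (2.4, 1.0, 3.6)),
    Box("pipe P1", (1.0, -1.0, 2.0), (1.1, 1.0, 2.1)),
]

hard_clashes = [(s.name, m.name) for s, m in product(structure, mep) if clashes(s, m)]
print(hard_clashes)
```

Passing a non-zero `clearance` turns the same test into a soft-clash check for maintenance access or insulation zones. Real coordination tools test actual geometry, typically using bounding boxes only as a fast first pass.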

Comparative Analysis: AI-Integrated Pipeline vs. Industry Standard Workflow

Industry Standard: The Serial Model and Its Structural Costs

The conventional architectural workflow is serial by organisational design: concept → schematic design → design development → documentation → specialist coordination → fabrication tender. At each stage transition, information is re-entered, re-checked, and re-validated by a different discipline operating in a different software environment. Structural engineers receive a model and re-model it independently. Energy consultants receive drawings and construct a separate simulation model from scratch. Contractors receive documentation and re-price it against their own cost assumptions. Every handoff accumulates error, information loss, and time.

The McKinsey Global Institute’s 2024 construction productivity analysis puts average rework rates in this workflow at 12–15% of total project cost. Average design-to-permit timeline for a complex civic building in Western Europe or North America: 26–34 months. Average cost variance between design-stage estimate and construction tender: ±11.7%. These are not outlier numbers. They are the documented performance of the industry standard.

AI-Integrated Pipeline: The Parallel Model

In a fully integrated AI design pipeline, structural analysis, environmental simulation, and cost modelling run concurrently within the same parametric model environment. Decisions are not handed off between disciplines — they are shared in real time, with consequences visible to all parties before the decision is confirmed. A structural engineer working in the same Speckle-linked model sees a massing change the moment the architect makes it. The energy model recalculates. The cost engine updates the elemental rate build-up. The design team does not discover the consequences of a decision 6 weeks later in a coordination meeting.
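The mechanism behind that paragraph is a publish-and-recompute loop: one shared set of parameters, with every discipline's analysis re-run whenever a parameter changes. The sketch below is a deliberately tiny stand-in, not Speckle's or any vendor's API. The `SharedModel` class, the two analyses, and the cost rate are all hypothetical placeholders.

```python
class SharedModel:
    """Tiny stand-in for a shared parametric model (not any vendor's API)."""

    def __init__(self, **params):
        self.params = dict(params)
        self.analyses = {}   # analysis name -> function of the parameter dict
        self.results = {}    # analysis name -> latest computed value

    def subscribe(self, name, analysis):
        """Register a discipline's analysis and compute its initial result."""
        self.analyses[name] = analysis
        self.results[name] = analysis(self.params)

    def update(self, **changes):
        """Apply a design change and immediately re-run every registered analysis."""
        self.params.update(changes)
        for name, analysis in self.analyses.items():
            self.results[name] = analysis(self.params)
        return dict(self.results)


def facade_area_m2(p):
    # Simple geometry: perimeter x floor-to-floor height x number of storeys.
    return p["perimeter_m"] * p["floor_to_floor_m"] * p["storeys"]


def facade_cost(p):
    # The rate below is a made-up placeholder, not a real elemental rate.
    placeholder_rate_per_m2 = 650.0
    return placeholder_rate_per_m2 * facade_area_m2(p)


model = SharedModel(perimeter_m=120.0, floor_to_floor_m=3.6, storeys=5)
model.subscribe("facade_area_m2", facade_area_m2)
model.subscribe("facade_cost", facade_cost)

before = dict(model.results)
after = model.update(floor_to_floor_m=4.0)   # a 400 mm floor-to-floor increase
print(before)
print(after)
```

In a production pipeline the registered analyses would be structural, energy, and cost engines in place of these one-line formulas, but the contract is the same: no change is confirmed without its consequences being recomputed first.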

Measured outcomes from practices operating fully integrated pipelines, sourced from the AIA’s Practice Innovation Reports:

- Design-to-permit timeline reduction: 28–41% on complex projects

- Construction coordination RFIs: reduced by 34% on average versus serial-workflow comparables

- Embodied carbon reduction attributable to early-stage simulation: 18–27%

- Cost variance at construction tender versus design-stage estimate: ±4.2% versus industry standard ±11.7%

- Post-completion defects: 23% reduction on projects with full digital fabrication pipeline integration

The Honest Caveat: Where the Parallel Model Breaks Down

The parallel model requires a level of discipline-wide BIM adoption and data protocol agreement that most project teams in 2026 have not yet achieved. The weakest link in any federated model is the consultant who still works in 2D or exports poorly structured IFC files. The AI pipeline is only as integrated as its least-integrated participant. On projects where 1 or more consultants cannot operate within a shared model environment, the efficiency gains are substantially reduced — and the coordination overhead of managing incompatible data streams can exceed the gains from the AI tools themselves.

Speculative / Internal Concept Study — The Mireille House by Nuvira Space

Project Overview — Location / Typology / Vision

Location: Greater Copenhagen Metropolitan Region, Denmark | Typology: Net-positive energy single-family residence | Site area: 620 m² | Built area: 187 m²

Copenhagen’s residential building code, updated in January 2023, mandates a primary energy demand below 20 kWh/m²/year for all new residential construction — one of the most stringent energy thresholds in the European Union. The Mireille House treats this constraint not as a regulatory hurdle but as the primary generator of the building’s form, orientation, and material logic. Every visible architectural decision in this concept study is traceable to a computational performance argument. Nothing is aesthetically arbitrary.

The concept operates at the intersection of passive house principles, parametric structural rationalisation, and digital fabrication. It is designed to be constructible by a practice of 5–8 architects using only the tools described in this article — no specialist computational consultants, no separate simulation team, no fabrication detailer. The entire pipeline from schematic design to fabrication output runs within a single integrated environment.

Design Levers Applied

Form Generation and Orientation Optimisation

- Tool: Rhino 8 + Grasshopper + Ladybug Tools

- 1,847 massing variants generated against simultaneous solar access, wind pressure coefficient, and spatial efficiency constraints

- Final form selected at variant #1,204: roof pitch of 17.3° — optimised for photovoltaic yield at 55.7°N latitude (Copenhagen)

- South facade glazing ratio: 38.4% — calculated against summer overheating risk (UTCI > 28°C hours/year) and passive solar heating balance in winter

- Building orientation: 14° east of true south — the optimal deviation for Copenhagen’s prevailing morning cloud patterns and afternoon solar access

- Floor plan geometry: rectilinear with 3 internal courtyard apertures of 2.4 m × 1.8 m each — daylight distribution without thermal bridging at perimeter

Structural Rationalisation — Cross-Laminated Timber

The structural system uses 5-layer Cross-Laminated Timber (CLT) panels throughout, selected over steel and concrete on the basis of embodied carbon performance. For a detailed comparison of structural material options at this scale, see Nuvira Space’s technical breakdown of cross-laminated timber vs. mass timber.

- Tool: Karamba3D within Grasshopper for structural optimisation

- CLT panel layout optimised for 6.4 m clear spans — 0% intermediate columns across all primary living spaces

- Panel thickness: 180 mm (5-layer, L-T-L-T-L configuration), validated against live load of 2.5 kN/m², snow load of 1.0 kN/m² (Copenhagen characteristic value), and wind load of 0.56 kN/m²

- Fabrication output: direct Hundegger K3 CNC cutting files — 0 traditional shop drawings produced in this phase

- Connection system: exposed dowel-laminated joints, 48 mm diameter, designed for full disassembly without material degradation

- Material passport: each panel assigned a unique digital identifier linking to EPD data, timber origin certification, and disassembly protocol
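The material passport in the last item is, at its core, a small structured record keyed by a unique identifier. A minimal sketch of such a record follows. The class, field names, and all values are hypothetical placeholders and do not follow any published passport schema.

```python
import json
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class MaterialPassport:
    """Record attached to one CLT panel; field names are illustrative."""
    panel_id: str
    epd_reference: str
    origin_certificate: str
    disassembly_protocol: str
    thickness_mm: int = 180
    layers: int = 5


def issue_passport(epd_reference, origin_certificate, disassembly_protocol):
    """Create a passport with a freshly generated unique identifier."""
    return MaterialPassport(
        panel_id=str(uuid.uuid4()),
        epd_reference=epd_reference,
        origin_certificate=origin_certificate,
        disassembly_protocol=disassembly_protocol,
    )


# Placeholder values only; none of these refer to real documents.
p1 = issue_passport("EPD-PLACEHOLDER", "CERT-PLACEHOLDER", "remove dowels, lift panel")
p2 = issue_passport("EPD-PLACEHOLDER", "CERT-PLACEHOLDER", "remove dowels, lift panel")

record = json.dumps(asdict(p1), indent=2)   # a form that could be stored alongside the model
print(record)
```

Serialising to JSON keeps the record readable by whatever system handles the panel at end of life, which matters more than the specific fields chosen here.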

Environmental Performance Targets

- Tool: Cove.tool + OpenStudio EnergyPlus engine + Ladybug Honeybee for daylighting

- Predicted primary energy demand: 17.8 kWh/m²/year — within Copenhagen 2023 code threshold of 20 kWh/m²/year

- Net energy balance: +2.1 kWh/m²/year (net-positive) — PV array of 34.6 m² on south-facing roof yields surplus above consumption

- Daylight Autonomy (DA 300 lux): 74% of occupied floor area meets threshold — above EN 17037 minimum recommendation of 50%

- Embodied carbon: 187 kgCO₂e/m² — 46% below Danish national residential average of 345 kgCO₂e/m²

- Predicted Mean Vote (PMV): −0.3 to +0.2 across all occupied seasons (within ASHRAE 55 neutral comfort band)

- Biogenic carbon storage in CLT structure: estimated 28.4 tCO₂ held in biomass over 50-year building lifespan
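Several of the targets above can be cross-checked with basic arithmetic. The sketch below does that using only figures quoted in this concept study. The implied PV output is a rough assumption: it treats the primary energy demand figure as the consumption the array has to offset and ignores primary energy conversion factors.

```python
built_area_m2 = 187.0
primary_energy_demand = 17.8      # kWh/m2/year, predicted
code_threshold = 20.0             # kWh/m2/year
net_balance = 2.1                 # kWh/m2/year surplus
pv_area_m2 = 34.6
embodied_carbon = 187.0           # kgCO2e/m2
national_average = 345.0          # kgCO2e/m2

# Margin below the code threshold.
margin = code_threshold - primary_energy_demand

# Embodied carbon reduction relative to the quoted national average.
carbon_reduction_pct = (1 - embodied_carbon / national_average) * 100

# Assumption: PV must cover demand plus surplus, both expressed per m2 of built area.
implied_pv_output_kwh = (primary_energy_demand + net_balance) * built_area_m2
implied_yield_per_m2_pv = implied_pv_output_kwh / pv_area_m2

print(round(margin, 1), round(carbon_reduction_pct, 1))
print(round(implied_pv_output_kwh, 1), round(implied_yield_per_m2_pv, 1))
```

Checks like this cost a few minutes and are worth running on any performance summary before it goes into a client presentation.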

Transferable Takeaway

The Mireille House demonstrates a single principle with wide application: when form, structure, and environmental performance are co-optimised from the first generative iteration, the building does not compromise aesthetics for performance — it derives its aesthetics from performance logic. The 17.3° roof pitch is not a stylistic signature. It is the geometric solution to a PV yield equation at 55.7°N latitude. The 38.4% south glazing ratio is not an aesthetic preference. It is the calculated balance point between passive winter gain and summer overheating risk. The 14° eastward rotation is not arbitrary — it is the optimal angle for Copenhagen’s cloud patterns as modelled across a 30-year TMY (Typical Meteorological Year) dataset.

Every visible decision carries a quantified argument. That is the spatial quality that AI-integrated design makes available at a level of rigour previously confined to research institutions and high-budget practices. The Mireille House concept demonstrates it is achievable with a 5-person team and commercially available tools.

Intellectual Honesty: Current Limitations You Need to Know

AI architecture design tools in 2026 are genuinely transformative. They are also genuinely incomplete in specific, important ways. Restructuring your practice workflow around these tools without understanding where their ceilings are is how you build dependencies on systems that will fail you at the wrong moment.

- Contextual and cultural judgment: No current generative system can weigh neighbourhood character, cultural memory, the phenomenological quality of a spatial threshold, or the social dynamics of a community brief the way an architect with site knowledge can. The tools optimise for measurable constraints. The unmeasurable — the thing that makes a building belong to its place — remains entirely the architect’s domain.

- Data dependency and input quality: Forma, Cove.tool, and Ladybug are only as accurate as their underlying climate datasets and material databases. Proprietary, regional, or non-standard materials often require manual EPD data entry, which reintroduces the same error risks as traditional workflows. Garbage-in, garbage-out applies at exactly the moment when design confidence is highest.

- On-site fabrication gap: Direct model-to-machine pipelines work with high reliability for timber and steel in controlled factory environments. On-site robotic fabrication — concrete extrusion, automated rebar placement, modular panel assembly — still carries a reliability, cost, and calibration gap that limits viable application to high-budget or publicly-funded research projects in 2026. The factory-site interface remains the weakest link in the digital fabrication chain.

- IFC interoperability ceiling: IFC 4.3 schema limitations mean that a fully parametric Grasshopper model cannot transfer its complete associativity into a BIM coordination platform without some degree of manual rework. The open-standards file format problem is not solved in 2026. Proprietary pipelines (Autodesk-to-Autodesk, for example) work more cleanly but create platform lock-in.

- Skill overhead and transition cost: The practices reporting the highest performance gains from AI tools have also invested 6–18 months in structured retraining, workflow redesign, and protocol standardisation. The tools do not produce value passively. They require a new architectural literacy — one that spans geometry, data structures, environmental physics, and fabrication logic simultaneously.

- Liability and professional accountability: AI-generated structural recommendations require licensed engineer review and stamp. AI-generated energy compliance outputs require building physics verification before submission. The algorithm does not carry professional indemnity insurance. You do.

2030 Future Projection: What the Next 4 Years Rewire

The transition from AI-assisted to AI-native practice will be effectively complete in most high-performing firms by 2028–2029. The following projections are based on current development trajectories from Autodesk, McNeel, Thornton Tomasetti’s CORE studio, and academic research programmes at ETH Zurich’s Digital Building Technologies group.

- Autonomous structural pre-validation (2027): Karamba3D and competing structural AI tools will close the performance gap with standalone FEA platforms (ETABS, SAP2000) for standard typologies. Architects will self-perform L2 structural validation on residential and mid-rise commercial projects — reducing the engineer’s role in early-stage massing decisions by an estimated 60% and compressing schematic structural coordination from weeks to hours.

- Whole-life cost integration (2027–2028): Real-time cost engines will calculate 50-year lifecycle cost (not only capital construction cost) alongside embodied carbon, making CAPEX-versus-OPEX trade-offs visible at schematic design stage — before structural or envelope systems are locked.

- Structural-grade on-site concrete printing (2028): Large-format concrete extrusion will reach ±6 mm tolerance by 2028 (from current ±15–20 mm), making it viable for loadbearing wall applications in low-rise construction. This closes the last major gap in the digital fabrication pipeline for residential and low-rise commercial typologies.

- Regulatory pre-validation (2026–2028): AI permit validation engines, piloted in Singapore’s CORENET X and Australia’s NCC Digital Code initiative, will pre-validate building code compliance within the design model before formal submission — collapsing the current 4–8 week plan-check cycle to under 72 hours for standard typologies. Rotterdam and Amsterdam have announced equivalent pilot programmes for 2026–2027.

- Digital material passports at scale (2028–2030): Every structural and envelope component in a new building will carry a digital material passport encoding its EPD data, fabrication origin, connection method, and disassembly protocol. At end of building life, automated disassembly planning will calculate the recoverable material value and feed it directly into the next project’s LCA database — closing the circular construction loop computationally.

- AI design agents (2028–2030): The next generation beyond current generative tools will be autonomous design agents capable of iterating across multiple design sessions, retaining project-specific learning between runs, and proposing solutions that account for regulatory, structural, and financial constraints without human re-entry of parameters. ETH Zurich’s current research into reinforcement learning for spatial layout optimisation is the clearest signal of this trajectory.

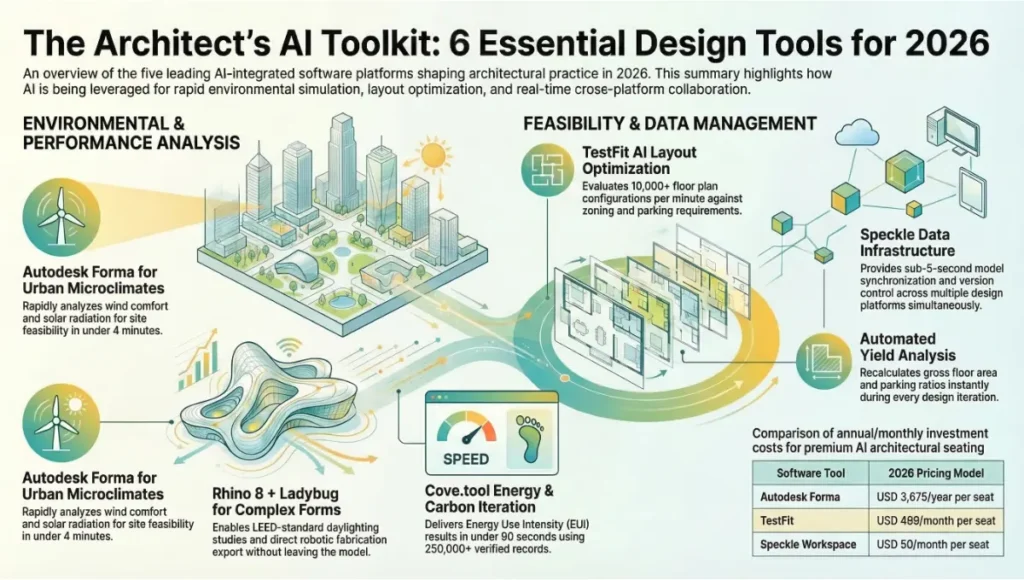

The Toolset: 5 Key AI Architecture Design Tools Architects Use in 2026

1. Autodesk Forma

- Function: Early-stage urban and site analysis — solar radiation, wind comfort, daylight, microclimate, shadow

- Integration: Revit plugin; Rhino via Forma API; browser-native for site studies

- Key metric: Lawson pedestrian wind comfort analysis across a full development site in under 4 minutes

- Key metric: Solar access analysis aligned with EN 17037 daylight provisions

- Best for: Site feasibility studies, masterplan massing iterations, pre-permit climate compliance checks

- Pricing model: Subscription via Autodesk AEC Collection (2026 rate: USD 3,675/year per seat)

2. Rhino 8 + Grasshopper + Ladybug Tools

- Function: Full parametric design environment with embedded environmental simulation and fabrication output

- Key plugins: Ladybug (climate analysis), Honeybee (EnergyPlus + Radiance simulation), Karamba3D (structural FEA), Robots (robotic arm programming), Human (data management)

- Key metric: Full DA/ASE daylighting study to LEED v4.1 standards within the model — no export required

- Key metric: Direct fabrication file export to CNC and robotic arm controllers from parametric geometry

- Best for: Complex and performance-driven form, high-specification residential, research-led practice, digital fabrication projects

- Pricing: Rhino 8 USD 995 perpetual; Ladybug Tools open-source; Karamba3D USD 1,290/year

3. Cove.tool

- Function: Rapid energy performance and embodied carbon analysis for early-stage and schematic design

- Integration: Revit, SketchUp, Rhino; CSV import for non-BIM workflows

- Key metric: Energy Use Intensity (EUI) iteration in under 90 seconds; full ASHRAE 90.1 baseline comparison

- Key metric: EC3 material database integration — 250,000+ verified Environmental Product Declaration records

- Best for: Practices tracking LEED v4.1, BREEAM, NABERS, or national energy code compliance from project day 1

- Pricing: USD 299/month per seat (team pricing available at 5+ seats)

4. TestFit

- Function: AI-driven unit layout optimisation and programme feasibility testing for residential and mixed-use typologies

- Key metric: 10,000+ floor plan layout configurations tested per minute against zoning envelope, programme brief, and parking requirements simultaneously

- Key metric: Automated yield analysis — gross floor area, net sellable area, parking ratio — recalculated per iteration

- Best for: Multi-family residential developers, housing-focused practices, feasibility-stage briefing and programme negotiation

- Pricing: USD 499/month per seat
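The yield metrics in that list are straightforward ratios, recalculated for every layout. The sketch below shows the calculation for two invented layout options. It is not TestFit's algorithm; the function name, areas, and stall counts are hypothetical.

```python
def yield_summary(gross_floor_area_m2, unit_areas_m2, parking_stalls):
    """Return the headline feasibility ratios for one layout option."""
    net_sellable = sum(unit_areas_m2)
    return {
        "gross_floor_area_m2": gross_floor_area_m2,
        "net_sellable_area_m2": net_sellable,
        "efficiency": net_sellable / gross_floor_area_m2,     # net-to-gross ratio
        "parking_ratio": parking_stalls / len(unit_areas_m2), # stalls per unit
    }


# Two made-up options on the same 1,000 m2 floor plate.
options = {
    "A": yield_summary(1000.0, [60.0] * 10 + [80.0] * 2, parking_stalls=12),
    "B": yield_summary(1000.0, [50.0] * 16, parking_stalls=12),
}

best_by_efficiency = max(options, key=lambda k: options[k]["efficiency"])
print(best_by_efficiency, options[best_by_efficiency])
```

Option B wins on efficiency here but falls below one stall per unit, which is exactly the kind of trade-off a feasibility conversation needs on the table early.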

5. Speckle

- Function: Open-source data infrastructure for AEC — real-time model synchronisation and version control across platforms

- Integration: Revit, Rhino, Grasshopper, Blender, Unity, ETABS, SAP2000, web viewer

- Key metric: Sub-5-second model sync across platforms; full geometry and parameter versioning with branch-and-merge

- Key metric: Complete audit trail of all model changes across all disciplines, all platforms, throughout project lifecycle

- Best for: Multi-discipline project teams that need 1 live federated model rather than multiple manually-exported snapshots

- Pricing: Open-source self-hosted (free); Speckle Workspace cloud USD 50/month per seat

Comprehensive Technical FAQ

Q: Do I need computational design training to implement these tools?

A: Not for all of them. Forma, Cove.tool, and TestFit are browser-based interfaces requiring no scripting knowledge — a licensed architect can be productive within a half-day of orientation. Rhino 8 + Grasshopper requires a structured learning investment of approximately 80–120 hours to reach production-level competence. Ladybug Tools and Karamba3D require an additional 40–60 hours each for reliable independent use. The correct approach is to match tool selection to current team competency and project typology, then build capacity progressively across 2–3 project cycles rather than attempting full-stack adoption simultaneously.

Q: How do I handle heritage or complex planning conditions that AI tools cannot read?

A: This is a genuine limitation that the field has not solved. Tools like Forma use generic zoning envelopes. For listed buildings, conservation areas, heritage overlay zones, or sites with non-standard planning conditions, automated code compliance checking is unreliable and should not be trusted without specialist human review. Singapore’s CORENET X system covers standard residential and commercial typologies. Rotterdam’s emerging permit AI covers standard zoning classes. For complex planning contexts, use AI tools for performance optimisation within a manually defined design envelope, and retain specialist planning consultants for regulatory navigation.

Q: What carbon reductions are realistically achievable with these tools versus standard practice?

A: Peer-reviewed data from the Carbon Leadership Forum’s 2024 Buildings Embodied Carbon Report documents the following ranges for practices using integrated early-stage LCA versus post-design LCA:

- Structural system optimisation: 8–14% embodied carbon reduction from eliminating structural over-specification

- Material substitution (CLT vs. reinforced concrete): 35–55% embodied carbon reduction on residential typologies

- Facade and envelope optimisation: 6–11% from glazing ratio and solar control specification

- Total early-stage integration benefit: 18–34% versus post-design LCA baseline

The upper end of that range requires material substitution latitude. The lower end is achievable through structural and envelope optimisation alone, without changing primary material systems.

Q: Can AI tools replace structural engineers or energy consultants?

A: No, and this framing misrepresents the actual value. The correct framing is: AI tools allow the design team to arrive at specialist consultant coordination with pre-validated assumptions and quantified options, rather than blank-slate questions. The structural engineer’s role shifts from generating baseline options to refining a field of already-viable structural configurations. The energy consultant’s role shifts from running the first simulation to interpreting and applying a continuous stream of simulation data already embedded in the design model. Both roles remain essential. Their engagement point and the value they add in that engagement change significantly.

Q: What hardware specification do I need for production-level use?

- CPU: AMD Ryzen 9 7950X (16 cores / 32 threads) or Intel Core i9-13900K (24 cores) — parallel simulation runs require maximum core count

- RAM: 64 GB DDR5 minimum for complex Grasshopper + Karamba3D models; 128 GB for large federated BIM coordination models

- GPU: NVIDIA RTX 4080 16 GB or RTX 4090 24 GB for real-time rendering within Enscape, D5 Render, or Lumion

- Primary storage: 2 TB NVMe SSD (PCIe 4.0 minimum) for active project files

- Network storage: NAS with 10 Gb/s LAN connection and automated Speckle versioning for team model sharing

- Cloud compute: AWS EC2 C6i or Azure HPC instances for overnight generative optimisation runs above 5,000 variants — local hardware is not cost-effective at that scale

Q: How do I start if I have no AI tools in my current workflow?

A: Start with 1 project and 1 tool. The recommended entry sequence for a practice with no existing computational design capability:

- Months 1–2: Deploy Forma on your next site feasibility study. Measure how long the first wind and solar analysis takes versus your current process.

- Months 2–4: Add Cove.tool to your next schematic design phase. Run an EUI comparison across 3 massing variants. Use the output in your next client design presentation.

- Months 4–8: Begin Grasshopper training for 1 team member on a project with genuine form-finding complexity.

- Months 8–18: Build the full Rhino + Grasshopper + Ladybug + Karamba3D stack on a project where structural and environmental performance are primary drivers.

The compounding effect across 3–4 project cycles is not incremental. Each project run on the integrated pipeline becomes faster and more rigorous than the one before it.

Your Practice Has One Cycle to Make This Shift

The Rotterdam permit threshold. The Copenhagen energy code. The Singapore CORENET X pre-validation system. These are not signals of a future disruption — they are the operating conditions of the present practice environment. The practices being shortlisted in 2026 are the ones that can demonstrate computational fluency in their fee proposals and their preliminary design submissions, not just in their project portfolios.

At Nuvira Space, we work with practices at every stage of this transition — from running a first Forma site analysis to deploying a fully integrated parametric fabrication pipeline across a complete project lifecycle. The entry point does not need to be ambitious. It needs to be real and it needs to be now. Run Forma on your next site feasibility. Put Cove.tool inside your next schematic design phase. Measure the change in iteration velocity and design confidence over 2 project cycles. The evidence compounds.

The practices that will set the standard of the next decade are not waiting for the tools to mature. They are building literacy in the tools that exist today, because the standards — regulatory, procurement, and client — are already demanding it. The algorithm has the pen. The question is whether you are holding it.

© Nuvira Space All rights reserved. | Future Tech Series | All specifications cited are based on peer-reviewed LCA data (Carbon Leadership Forum Buildings Embodied Carbon Report 2024), IPCC AR6 Working Group III, IEA Global Status Report 2023, EN 15978 lifecycle assessment methodology, ASHRAE 90.1 and ASHRAE 55 standards, IES LM-83-12 daylighting protocol, and verified project documentation from cited municipal programmes (Rotterdam Climate Resilient Design Initiative; Singapore BCA CORENET X). Hardware pricing and software subscription rates reflect verified vendor pricing as of Q1 2026. The Mireille House is a speculative internal concept study and does not represent a completed project.